Kindness and Code: The Algorithmic Contagion

Why “Fairness Debt” Is the Systemic Risk CROs Cannot Book Away in 2026

By Carla Canino, Founder & CEO of Kindlee

As of January 2026, financial institutions face a paradox: AI now underpins trading, credit, and customer channels, yet almost no board can answer a simple question: where does fairness risk sit on our balance sheet today?

AI agents increasingly intermediate customer journeys in onboarding, lending, collections, and servicing, but the cumulative impact of their decisions on vulnerable and underserved segments remains largely unmeasured.

We call this unmeasured exposure Fairness Debt: the latent, compounding liability created when automated systems make thousands of opaque micro-decisions about who is seen, who is served, and who is silently excluded. Fairness Debt accumulates silently, compounds with scale, and crystallizes only when regulators, customers, or markets force recognition. In 2026, it is no longer a reputational issue at the margins; it is a form of systemic risk cutting across conduct, model, and operational risk frameworks.

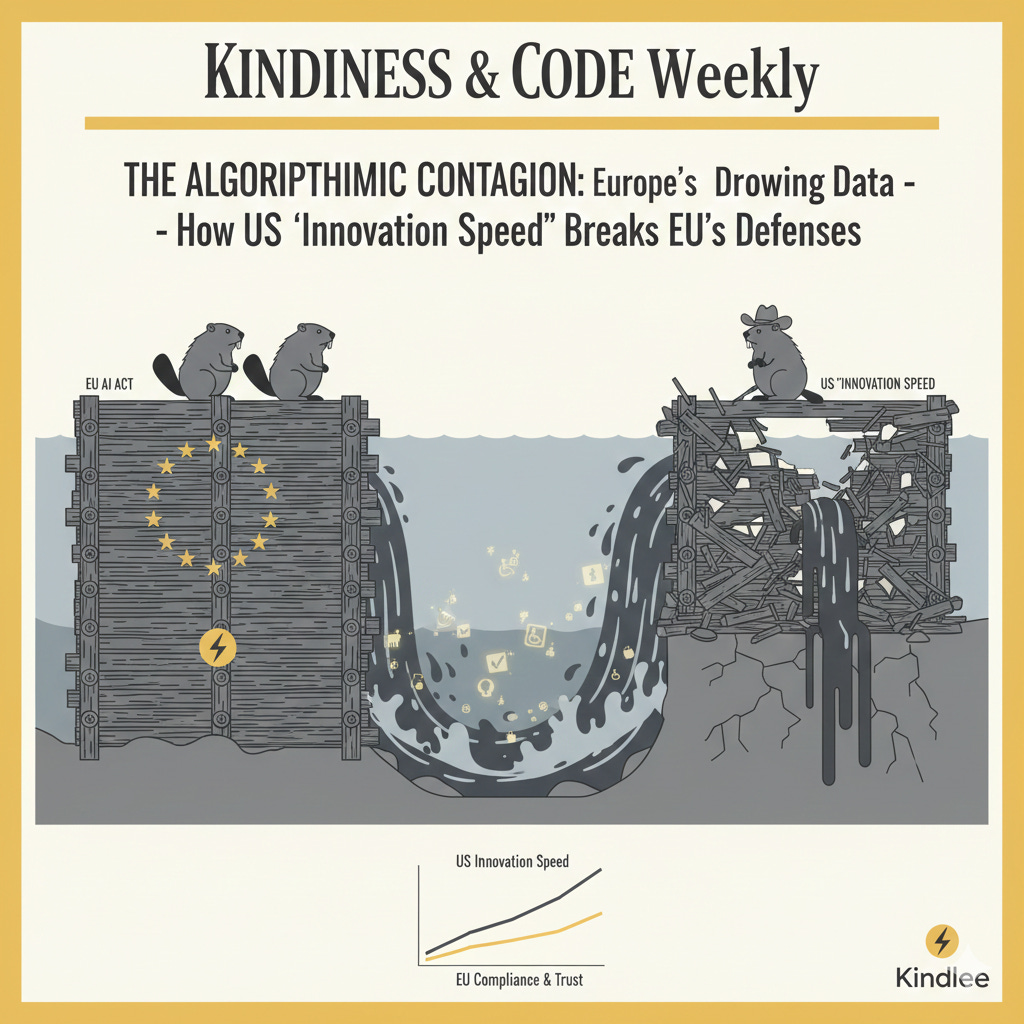

1. The geopolitical liability: importing bias, exporting sovereignty

The European fintech ecosystem has entered a Dependency Trap. Under the EU AI Act, AI systems used to evaluate the creditworthiness or credit score of natural persons are classified as high-risk, while adjacent financial decisioning systems such as KYC, transaction monitoring, and customer profiling need case-by-case classification. High-risk systems are subject to stringent requirements for transparency, monitoring, human oversight, and governance. Serious breaches may expose firms to significant turnover-based fines and remediation obligations.

Yet the “brains” of European finance are increasingly outsourced to third-country foundation model providers whose design trade-offs are neither fully transparent nor aligned with EU-level expectations.

When a non-EU model provider optimizes for innovation speed over contextual robustness, that decision propagates instantly into every European neobank, payment processor, or lender consuming those models via API. This is not a neutral technology choice. It is a form of regulatory leverage, in which imported model and conduct risk is underwritten by domestic capital, compliance infrastructure, and reputational buffers.

In practice, European institutions may be held to strict, outcome-oriented standards under the AI Act and existing consumer protection regimes while operating on black-box systems that never meaningfully recognized the intersectional needs of an EU citizen with a disability, a migrant background, or a non-standard credit profile. Supervisors are increasingly explicit: intent is not a shield. Measurable outcomes and effective controls are what matter.

2. The agentic economy: when AI fails to represent the human

The “user” of 2026 is no longer only a person; it is increasingly an agentic proxy. Financial services are moving toward AI-to-AI interactions, where a customer’s personal agent negotiates with a bank’s credit or servicing agent, and frontline contact is mediated by large language model–driven systems rather than human staff.

By 2025, a growing share of Tier 1 financial institutions had deployed AI agents in at least one operational process, with customer service, fraud detection, and risk management leading adoption.

This shift creates a crisis of representation: whose behavior, language, and constraints do these agents actually model and prioritize?

When an AI stack is optimized around a dominant digital dialect, device profile, or behavioral pattern, it systematically deprioritizes agents representing neurodivergent customers, individuals with cognitive needs, or those whose financial lives fall outside the training distribution.

This is Algorithmic Redlining 2.0—discrimination in effect rather than intent, driven not by explicit exclusion rules but by optimization surfaces that favor the already well-represented.

Interdependencies amplify the risk. Bias or blind spots in a KYC provider’s model can cascade into transaction monitoring, credit scoring, and personalization engines, generating correlated failure modes across the stack rather than isolated glitches. In Kindlee’s production evaluations across neobanks and payment providers, the majority of AI “failures” are not hard crashes but soft denials: the system fails to understand or situate the user’s context appropriately, forcing escalation to a human agent and eroding the very ROI that justified automation in the first place (Kindlee Cost of Trust Index, 2025).

3. Fairness Debt: from metaphor to operational risk metric

For a Chief Risk Officer or Head of Product, the core question is no longer “Does it work?” but “How does it drift—and for whom?”

Traditional Model Risk Management (MRM) frameworks were designed for static or slowly changing models, not for continuously learning, conversational, or agentic systems interacting with adversarial environments and evolving data.

Within this landscape, Fairness Debt can be defined operationally as:

The cumulative, unmeasured disparity in outcomes experienced by protected or vulnerable segments across AI-mediated decisions, controlling for relevant risk factors.

Each biased or context-blind decision taken by an agent in production adds to this debt, which eventually manifests as regulatory exposure, operational friction, and value destruction.
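One way to make this definition concrete is sketched below. It is a minimal illustration under stated assumptions, not a description of any institution's or vendor's methodology: "controlling for relevant risk factors" is approximated by comparing segments only within the same risk band, and the debt is expressed as the estimated number of approvals a segment would have gained had it been approved at a reference segment's rate. The function names, the tuple layout, and the choice of a single reference segment are all hypothetical.

```python
from collections import defaultdict


def excess_denials(decisions, reference_segment):
    """Estimate, per segment, how many more approvals the segment would have
    received had it been approved at the reference segment's rate within
    each risk band.

    decisions: iterable of (segment, risk_band, approved) tuples.
    Returns {segment: estimated excess denials}; positive values mean the
    segment fares worse than the reference at comparable risk.
    """
    # counts[risk_band][segment] -> [approved_count, total_count]
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for segment, risk_band, approved in decisions:
        cell = counts[risk_band][segment]
        cell[0] += int(bool(approved))
        cell[1] += 1

    excess = defaultdict(float)
    for by_segment in counts.values():
        if reference_segment not in by_segment:
            continue  # no comparable reference decisions in this band
        ref_approved, ref_total = by_segment[reference_segment]
        ref_rate = ref_approved / ref_total
        for segment, (approved, total) in by_segment.items():
            if segment != reference_segment:
                # signed gap: bands where the segment does better offset the total
                excess[segment] += (ref_rate - approved / total) * total
    return dict(excess)


def fairness_debt(periods, reference_segment):
    """Accumulate excess denials over successive reporting periods."""
    debt = defaultdict(float)
    for decisions in periods:
        for segment, value in excess_denials(decisions, reference_segment).items():
            debt[segment] += value
    return dict(debt)
```

Calling `fairness_debt` with one list of decisions per reporting period yields a running total per segment. Risk banding is the simplest possible control; a production analysis would likely need a richer adjustment (for example a regression on risk-relevant variables) and legal review of which segment attributes may be processed.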

Its impacts are measurable across classic risk categories:

Conduct risk: systematically disadvantageous outcomes in onboarding, pricing, or servicing—even without explicit intent.

Model risk: unmonitored drift across segments where inputs, language, or behavioral signals differ from the training distribution.

Operational risk: escalation-driven headcount growth, complaints, remediation costs, and degraded customer experience.
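The model-risk item above, drift that goes unnoticed for particular segments, can be illustrated with a per-segment Population Stability Index (PSI). PSI is a widely used drift statistic chosen here for illustration; the article does not prescribe a specific metric. The sketch assumes a single numeric input feature, a sample of training-time values, and production values already grouped by segment. The function names, bin edges, and the floor applied to empty bins are assumptions.

```python
import math


def psi(expected, actual, edges, floor=1e-6):
    """Population Stability Index between two numeric samples.

    edges: ascending bin boundaries shared by both samples.
    floor: minimum bin share, so empty bins do not produce log(0).
    """
    def shares(values):
        if not values:
            raise ValueError("cannot compute PSI on an empty sample")
        counts = [0] * (len(edges) + 1)
        for v in values:
            # bin index = number of edges the value has reached or passed
            counts[sum(1 for e in edges if v >= e)] += 1
        return [max(c / len(values), floor) for c in counts]

    return sum((a - e) * math.log(a / e)
               for e, a in zip(shares(expected), shares(actual)))


def drift_by_segment(training_sample, production_by_segment, edges):
    """PSI of each segment's production inputs against the training sample."""
    return {segment: psi(training_sample, values, edges)
            for segment, values in production_by_segment.items()}
```

A segment whose inputs match the training distribution scores 0, and the score grows as the distributions diverge. The level at which a score triggers review is a policy choice for the model risk function rather than something the code can decide.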

To move Fairness Debt from metaphor to metric, boards should expect a Fairness Control Loop as disciplined as capital or liquidity management:

Identify

Map AI-mediated decisions in high-impact journeys (onboarding, SME lending, collections, vulnerability support).

Classify systems under the AI Act and relevant conduct and anti-discrimination regimes.

Quantify

Measure approval, pricing, friction, escalation, and outcomes across segments, controlling for risk-relevant variables.

Establish a Cost of Trust metric capturing operational, regulatory, and revenue impact over time.

Remediate

Implement bias mitigation and contextual safeguards where evidence shows systematically poorer outcomes.

Design explainable, auditable escalation and override mechanisms.

Report

Integrate fairness and Cost of Trust metrics into model risk committees and board reporting.

Maintain regulator-ready documentation linking observed behavior to controls and decisions.

Board-ready KPIs include:

Share of high-risk AI decisions covered by fairness and drift monitoring

Soft denial and escalation rates (segmented where legally appropriate)

Differential approval or offer rates after controlling for credit risk

Time-to-remediation for identified disparities
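Three of the KPIs listed above can be computed from a simple decision log, as the sketch below suggests. It is illustrative only: the `Decision` and `Disparity` record layouts are hypothetical, escalation to a human agent is used as a rough proxy for a soft denial, and time-to-remediation is summarized as a median over closed items. The differential approval KPI is left out because it needs a risk adjustment of its own.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Decision:
    segment: str
    high_risk: bool   # produced by a system classified as high-risk
    monitored: bool   # covered by fairness and drift monitoring
    escalated: bool   # handed to a human agent (soft-denial proxy)


@dataclass
class Disparity:
    identified: date
    remediated: Optional[date] = None  # None while still open


def monitoring_coverage(decisions):
    """Share of high-risk decisions under monitoring, or None if there are none."""
    high_risk = [d for d in decisions if d.high_risk]
    if not high_risk:
        return None
    return sum(d.monitored for d in high_risk) / len(high_risk)


def escalation_rate_by_segment(decisions):
    """Escalation rate per segment across all logged decisions."""
    totals, escalated = defaultdict(int), defaultdict(int)
    for d in decisions:
        totals[d.segment] += 1
        escalated[d.segment] += d.escalated
    return {s: escalated[s] / totals[s] for s in totals}


def median_days_to_remediation(disparities):
    """Median days from identification to remediation, ignoring open items."""
    closed = [(d.remediated - d.identified).days
              for d in disparities if d.remediated is not None]
    return median(closed) if closed else None
```

Ignoring open disparities keeps the median well defined but can flatter the figure, so a board pack would probably report the count and age of open items alongside it.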

Fairness Debt behaves like technical debt, with two crucial differences: it scales non-linearly with volume and complexity, and it is increasingly visible to supervisors, auditors, and the public.

4. The 600-day trust imperative

Leading strategy houses describe the next 18–24 months as the decisive window for AI-centric transformation in financial services. For most institutions, this aligns almost exactly with their next two supervisory cycles.

Interpreting “AI-centric” narrowly as “model-rich” is a strategy for failure in a regulatory environment moving toward outcome-based supervision. Supervisors are converging on three expectations: demonstrable control, traceable decision-making, and measurable management of adverse consumer impact.

Being AI-centric without being trust-centric is simply a modern form of concentration risk.

The winners of 2026 will not be those with the fastest inference speeds, but those that can:

Quantify fairness and Cost of Trust in real time

Demonstrate substantive compliance with the AI Act’s intent

Show customers—especially the historically underserved—that agentic systems expand, rather than narrow, access

5. Kindlee’s role—and the board’s next question

Trust is now the critical bottleneck of the AI economy. A financial institution that cannot express, monitor, and govern the effectiveness of its models in trust terms is not managing innovation; it is managing contagion.

Kindlee is built as responsible AI infrastructure for financial services, providing:

Continuous, production-grade fairness and impact monitoring across onboarding, credit, and servicing

A quantified Cost of Trust benchmark translating AI behavior into board- and regulator-ready metrics

EU-grade evidence packages aligned with the AI Act and existing MRM, GRC, and operational risk frameworks

The board-level question in 2026 is no longer “What is the cost of AI?” but:

“What is the Cost of Trust in our AI systems—and how are we managing it over time?”

Paying that cost proactively creates durable advantage. Paying it later—through churn, fines, and brand damage—is simply another form of systemic loss.

References & Sources (indicative)

European Union, AI Act (final text and supervisory guidance, 2024–2025)

European Banking Authority (EBA), Guidelines on ML & Model Risk

Financial Stability Board (FSB), AI and Financial Stability

Reuters, AI governance, fairness, and financial regulation coverage

Wharton AI & Analytics Initiative

Harvard Law School Forum on Corporate Governance

Kindlee, Cost of Trust Index, 2025 (multi-institution production evaluations)